ABSTRACT:

This informal presentation will focus on three aspects of the virtual acoustic modelling research currently under way at the University of York Audio Lab. Questions from the audience and discussion are encouraged throughout!

Developments in measuring the acoustic characteristics of concert halls and opera houses are leading to standardised methods of impulse response capture for a wide variety of auralisation applications. This work has been extended as part of a recent survey of selected archaeological sites in the UK, each of which has acoustic characteristics of particular interest. An overview of this survey is presented, together with possible future directions for the work.

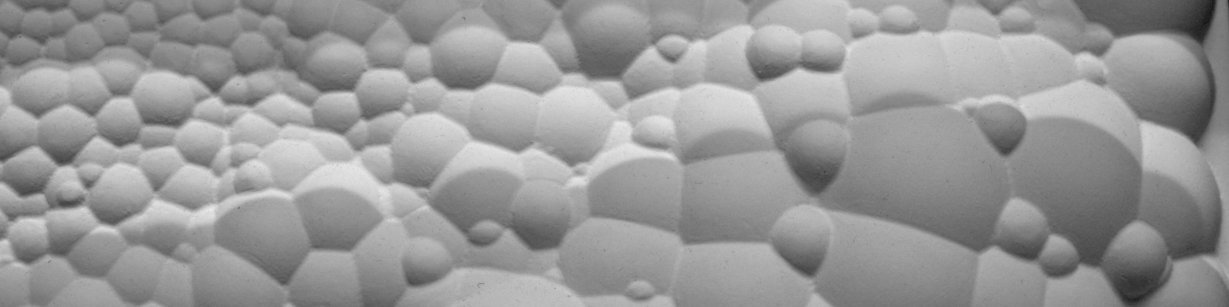

The digital waveguide mesh has been shown to be appropriate for accurately modelling the acoustic characteristics of a resonant object, including 3-D acoustic spaces. RoomWeaver is an application developed to provide a robust framework for waveguide-mesh-based room acoustics modelling and simulation, and it is capable of producing high-quality room impulse responses suitable for auralisation. An overview of the system is presented, highlighting recent developments and results.
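For readers unfamiliar with the technique, the following is a minimal sketch of a 2-D rectilinear waveguide mesh, written in its well-known equivalent finite-difference form. It is not RoomWeaver code and makes no claim about how that application is implemented. The function name `simulate_mesh`, the mesh size, the source and receiver positions, and the fixed-zero (phase-inverting) boundary are all assumptions chosen for illustration.

```python
def simulate_mesh(size=9, steps=32, source=(4, 4), receiver=(2, 6)):
    """Impulse response of a small 2-D rectilinear mesh (illustrative only).

    Each interior node is updated as
        p[n+1] = 0.5 * (sum of the four neighbours at n) - p[n-1],
    the finite-difference equivalent of a 4-port scattering junction.
    Boundary nodes are held at zero, an assumed phase-inverting wall.
    """
    prev = [[0.0] * size for _ in range(size)]  # pressures at time n-1
    curr = [[0.0] * size for _ in range(size)]  # pressures at time n
    curr[source[0]][source[1]] = 1.0            # unit impulse at the source

    response = []
    for _ in range(steps):
        # Record the receiver pressure before advancing one time step.
        response.append(curr[receiver[0]][receiver[1]])
        nxt = [[0.0] * size for _ in range(size)]
        for i in range(1, size - 1):
            for j in range(1, size - 1):
                neighbours = (curr[i - 1][j] + curr[i + 1][j]
                              + curr[i][j - 1] + curr[i][j + 1])
                nxt[i][j] = 0.5 * neighbours - prev[i][j]
        prev, curr = curr, nxt
    return response


if __name__ == "__main__":
    ir = simulate_mesh()
    print(ir[:8])
```

The returned list is a crude impulse response between the two nodes. A room-scale simulation would need a 3-D mesh, a far denser grid, and realistic boundary models, none of which are attempted here.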

The methods employed in modelling a space and measuring the results obtained are applicable to virtual spaces of any size. This has led us to apply multi-dimensional modelling techniques to the simulation of the resonant characteristics of the vocal tract, with a view to developing a new method of articulatory speech or singing synthesis. Is this approach a viable option? Results to date, based on MRI scans of the vocal tract, are demonstrated, and methods for developing a static digital waveguide mesh simulation into a dynamic model suitable for real-time synthesis are discussed.

Damian Murphy: "Virtual Acoustics – From Space to Voice"

Damian Murphy is a researcher at the University of York Audio Lab and a visiting researcher at CIRMMT.

May 01, 2008

16:30 - 18:00